Welcome to The Synthesis, a monthly column exploring the intersection of Artificial Intelligence and documentary practice. Co-authors shirin anlen and Kat Cizek will lay out ten (or so) key takeaways that synthesize the latest intelligence on synthetic media and AI tools—alongside their implications for nonfiction mediamaking. Balancing ethical, labor, and creative concerns, they will engage Documentary readers with interviews, analysis, and case studies. The Synthesis is part of an ongoing collaboration between the Co-Creation Studio at MIT’s Open Doc Lab and WITNESS.

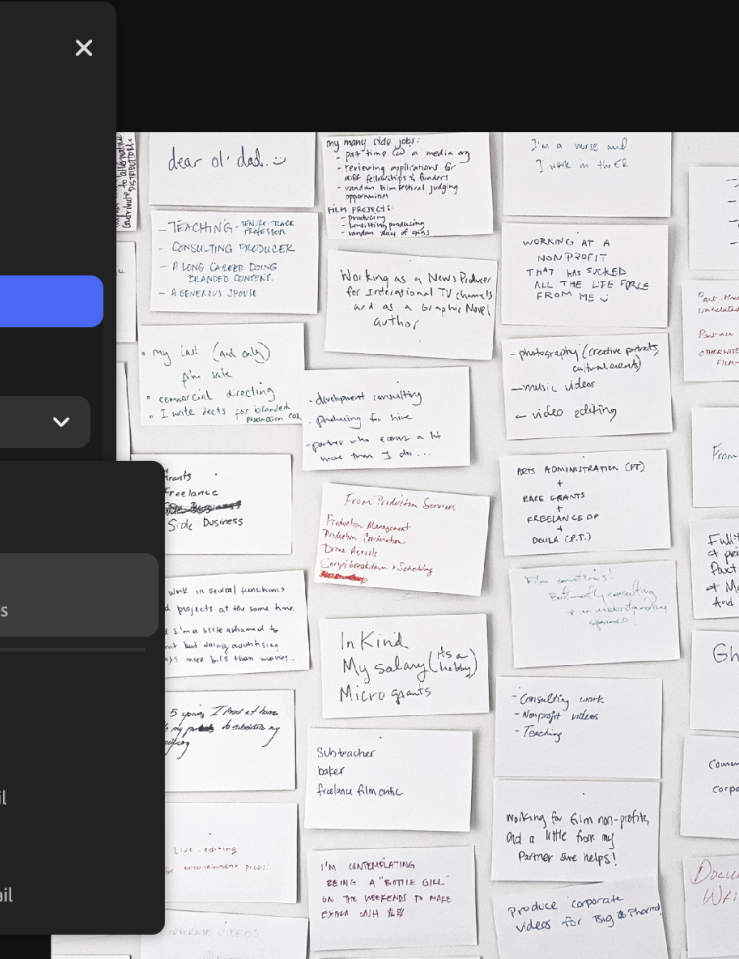

The documentary film editing process has always begun long before the first cut. It starts with long days (and sometimes months or years) of reviewing raw footage that must be screened, logged, and puzzled over. Meaning emerges slowly and unpredictably, through attention to details and connections. But that foundational relationship between filmmaker and footage is shifting.

Increasingly, filmmakers and editors are not meeting their footage directly. They are meeting it after and through AI.

A growing number of editing and asset-management platforms, including Adobe Premiere Pro, DaVinci Resolve, Riverside, Axle AI, and the enterprise “media intelligence” systems used across unscripted television, now offer AI-assisted logging, transcription, tagging, clustering, summarization, facial recognition, scene detection, and semantic search. These tools promise relief from the tedium and overwhelming scale of documentary material. But they can also introduce a quieter, less examined transformation: AI is becoming a gatekeeper to the editing process itself, deciding what is seen, in what order, and with what significance.

When AI filters and categorizes footage before a filmmaker ever presses play, it reshapes the field of possibility. Instead of exploring and wandering through material while following intuition, feelings of boredom, or curiosity, the filmmaker is guided along pre-structured pathways. Footage becomes searchable before it becomes experiential. Moments are labeled before they are felt. AI begins to define what becomes visible.

As these systems are currently designed, they tend to surface what is easily legible to machines: clear speech, recognizable faces, repeated patterns, and easily classifiable actions. Interviews may rise to the top. Clean audio is prioritized. Scenes that align with common categories (such as “protest,” “family,” and “conflict”) are well organized and retrievable. This kind of machine-first sorting is already common practice in reality TV production pipelines, where it is marketed as “media intelligence.”

But documentaries are often built on tension with predefined assumptions and categories. The quiet hesitation. The in-between moments and off-camera remarks. The ambiguous glance. The footage that doesn’t yet have a name. These are precisely the materials most likely to be flattened, miscategorized, or simply ignored by AI systems trained to detect the obvious. In this sense, AI doesn’t just help filmmakers find things faster; it subtly defines what counts as “findable.” For that reason, we must pause to consider the consequences of this new type of workflow.

Traditionally, documentary editing has been a deeply bottom-up process. During the transition from analog to digital editing, many editors mourned the loss of physically handling film strips, and even video cassettes, and with it a tactile, embodied relationship to the footage itself. Nonetheless, even in digital editing systems, filmmakers immerse themselves in footage, allowing patterns and meanings to emerge over time as they “listen” to it. The unexpected connection between two unrelated, surprising moments is not a luxury; it is often the engine of the entire creative work.

But when AI mediates that first encounter, the process risks becoming more top-down. Themes, often rooted in stereotypes, are suggested far too early. Clips are grouped into pre-existing categories. Summaries offer interpretations before the filmmaker has formed their own. The danger is not that AI tells a story, but that it narrows the range of stories that feel possible from the very moment the filmmaker first sits down with their footage.

This shifts and redefines the skills required for editing. If AI handles logging, transcription, and rough sorting, the editor’s role moves further upstream toward selecting, validating, and interpreting machine-generated structures. The craft of sitting patiently with footage, of noticing the unique edges, of dwelling in uncertainty, may become less central. In its place: prompt-writing, query refinement, and the ability to navigate algorithmic systems. These are not trivial skills. But they are different ones, and they carry different assumptions about how meaning is made. Over time, this could shape not just workflows, but aesthetics and politics.

If AI consistently surfaces certain kinds of material (the ones with clear narratives, structured interactions, and easily categorized events, essentially those that have been seen before), documentaries risk amplifying those biases. Stories might become more streamlined and more aligned with dominant patterns of recognition. Messiness, quirkiness, ambiguity, and slowness, the hallmarks of many powerful documentaries, are already under pressure from commercial and platform-driven demands for speed, clarity, and efficiency. AI risks accelerating that trend.

Meanwhile, content that is easily indexed and interpretable by AI (e.g., public events, front-facing spoken testimony, visually distinct actions) will be more readily available to filmmakers working under production deadlines and budget constraints. Quieter forms of documentation, such as domestic life, dynamic environments, embodied experience, and moments that unfold without clear signals, may be underrepresented, not because they are less important, but because they are less legible to the systems that now mediate access. Efficiency, in this context, is not neutral. Documentary practice has historically depended on time: time spent observing, revisiting, doubting, wandering, and living with footage long enough for unexpected meaning to emerge. AI systems optimized for speed inevitably reshape that relationship.

In documentary filmmaking, the story is not only in the footage. It is in the act of looking and engaging. And as AI becomes the one that looks first, we must ask: What is it teaching us to see, and what might we overlook as a result?

These are not inevitable futures. AI tools can be designed differently. They can surface uncertainty rather than hide it. They can expose their blind spots and allow filmmakers to toggle between machine-assisted and unfiltered views of their material. They can prioritize exploration, not just efficiency. That might mean designing systems that intentionally surface anomalies instead of only dominant patterns; interfaces that encourage accidental discovery rather than predictive sorting; or tools that make visible what remains unclassified, ambiguous, or unresolved within an archive. AI may ultimately play a valuable role in managing large volumes of footage, but on documentarians’ terms. Documentarians need to be at the helm of shaping the tools that shape the edit. Not the other way around.