Welcome to The Synthesis, a monthly column exploring the intersection of Artificial Intelligence and documentary practice. Co-authors shirin anlen and Kat Cizek will lay out ten (or so) key takeaways that synthesize the latest intelligence on synthetic media and AI tools—alongside their implications for nonfiction mediamaking. Balancing ethical, labor, and creative concerns, they will engage Documentary readers with interviews, analysis, and case studies. The Synthesis is part of an ongoing collaboration between the Co-Creation Studio at MIT’s Open Doc Lab and WITNESS.

In conversations about ethics in documentary practice, a distinction is often made between AI used as an assistant and AI used as a creative tool. The implication is that assistance is neutral (organizational, technical, supportive), while creativity crosses into authorship and interpretation.

At first glance, this distinction feels sensible. AI can organize transcripts, tag archival footage, clean noisy audio, stabilize shaky video, or translate interviews. In these roles, it resembles a research assistant or post-production technician. It helps manage material; it doesn’t alter meaning.

But that boundary is eroding.

As enhancement systems grow more powerful and invisible, we are crossing a line from assistance into meaning-changing, from restoring what is there to generating what could plausibly be there. That shift demands our attention.

Enhancement Is Not Neutral

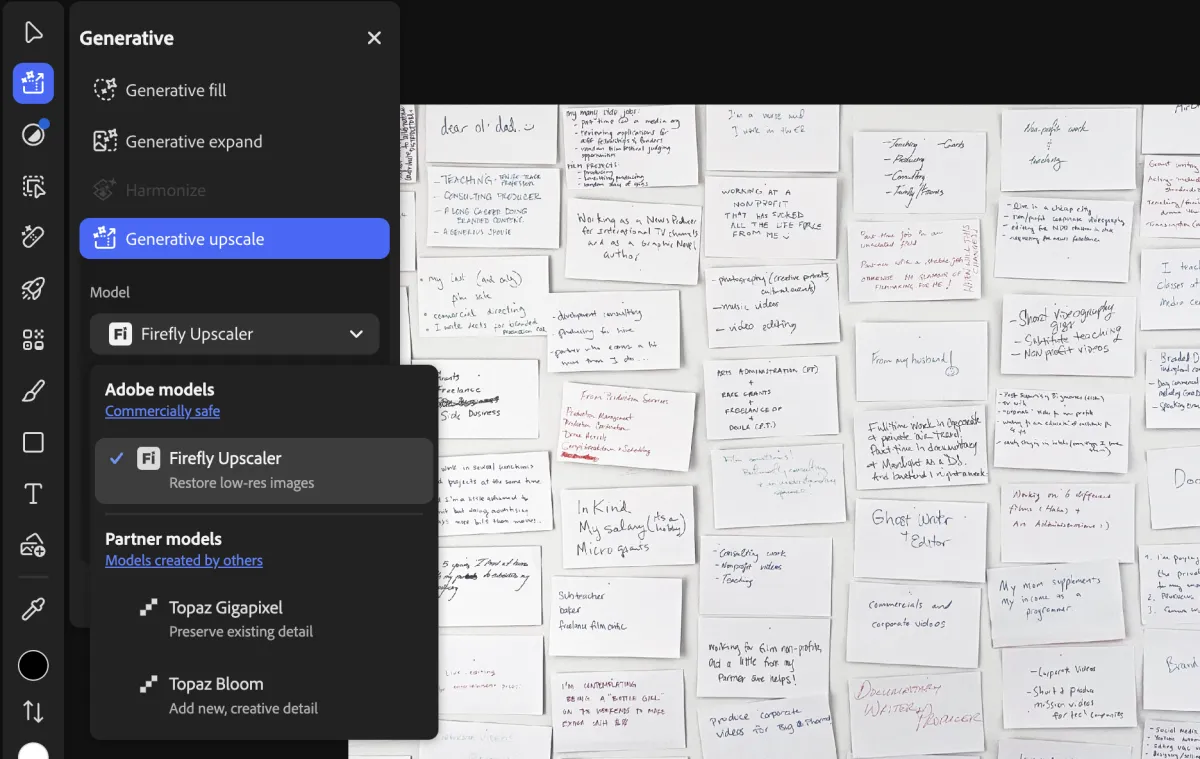

AI enhancement tools are now widely embedded across the media ecosystem: in dedicated software such as Topaz Video AI, in consumer apps, in cloud services, and increasingly, inside mainstream editing platforms like Adobe Photoshop and Premiere Pro. Often marketed as “up-rezzing,” “super-resolution,” or “AI restoration,” these tools promise to upscale resolution and “fill in” missing detail from poor source material. For filmmakers working with mixed formats (e.g., VHS home videos, compressed news clips, degraded CCTV, early digital footage), these systems can feel miraculous. Blurred images sharpen. Faces gain definition. Shadows acquire texture. But no pixel an AI system generates is recovered; each one is predicted.

These systems do not uncover hidden details embedded in an image. They generate new details based on statistical inference. Trained on millions of high-resolution images, the model learns what blurred patterns typically correspond to: what an eye “usually” looks like, how skin “normally” textures, how edges “tend” to resolve. Consider a CCTV frame capturing a person forty feet from the camera. The original data may contain only a few dozen pixels representing the face. When enhanced, the system may generate eyelashes, skin texture, and sharper jawlines, none of which the camera captured. The result appears clearer and more authoritative, but the added detail is synthetic. Therefore, enhancement does not retrieve lost truth; it reconstructs it. And when reconstruction is mistaken for recovery, probability is confused with evidence.

Even developers of enhancement software acknowledge this. Eric Yang, the CEO and co-founder of Topaz Labs, whose tools are widely used in documentary workflows, told us in an interview that enhancement does not “find” detail but synthesizes it, and cautioned about its use with forensic and evidentiary materials. The new information is produced. The distinction is critical.
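A toy sketch can make the point about prediction versus recovery concrete. It assumes a deliberately crude model of a low-resolution camera (averaging each 2x2 block of pixels into one) and two made-up grayscale patches; the function name `downsample` and the pixel values are illustrative assumptions, not drawn from any real enhancement tool. Two patches with different fine detail collapse to the same low-resolution image, so nothing in that image can say which one was in front of the lens.

```python
def downsample(img, factor=2):
    """Average each factor x factor block into one pixel.

    A crude stand-in for what a distant or low-quality camera does:
    many different fine-grained scenes collapse into the same coarse values.
    """
    height, width = len(img), len(img[0])
    out = []
    for r in range(0, height, factor):
        row = []
        for c in range(0, width, factor):
            block = [img[r + i][c + j] for i in range(factor) for j in range(factor)]
            row.append(sum(block) / len(block))
        out.append(row)
    return out


# Two hypothetical 4x4 grayscale patches (0 = black, 255 = white)
# with clearly different fine detail.
patch_a = [
    [0, 200, 50, 50],
    [200, 0, 50, 50],
    [100, 100, 0, 80],
    [100, 100, 80, 0],
]
patch_b = [
    [100, 100, 0, 100],
    [100, 100, 100, 0],
    [200, 0, 40, 40],
    [0, 200, 40, 40],
]

low_a = downsample(patch_a)
low_b = downsample(patch_b)

print(patch_a == patch_b)  # False: the original scenes differ
print(low_a == low_b)      # True: the captured low-res data is identical
```

Any upscaler handed that low-resolution image has to pick one plausible high-resolution answer among many. Real systems make that choice from patterns in their training data, which is why the added detail reflects what is typical rather than what was there.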

When Enhancement Alters Meaning

The consequences are no longer theoretical. In January, a widely circulated image purportedly showing a Minneapolis shooting turned out to be an AI-enhanced still generated from blurry bystander footage. What appeared to be a clear photographic capture of events was in fact a synthetic reconstruction. Limbs were distorted. Context shifted. The enhancement led some to believe that the object in Alex Pretti’s hand was a gun, when in reality it was a phone. The image’s persuasive power rested precisely on the detail that did not originate in the camera. The case shows the point at which enhancement becomes misrepresentation. Clarity carries weight. A sharper image may appear more truthful in volatile political contexts. But when that sharpness is synthetic, it alters interpretation.

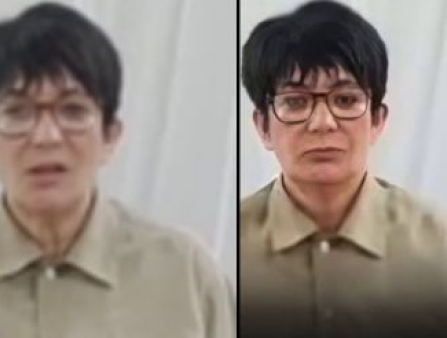

Another recent example involved an AI-upscaled image of Ghislaine Maxwell circulating on social media. The enhanced still, derived from a distant, low-quality deposition video, was compared to other photographs of Maxwell, with users claiming that differences in facial structure proved the person in the video was not her.

But enhancement systems often hallucinate detail. Moreover, facial appearance can vary dramatically depending on lens compression, viewing distance, and camera settings. In Maxwell’s case, according to leading media forensics expert Hany Farid, the apparent differences likely reflect a combination of synthetic detail generation and optical distortion. There is no credible evidence that the person in the video was not Maxwell. Yet enhancement fueled doubt and conspiracy theories, and the political consequences are obvious.

We saw a related dynamic play out recently in coverage of the U.S.-Israel-Iran war. A widely shared image amplified an AI-enhanced still frame, presenting it as clearer visual evidence of what had occurred. BBC Verify, in its live coverage, later contextualized the image as digitally altered or enhanced in ways that introduced misleading detail.

Even in less politically charged contexts, the risks are visible. Viewers recently reacted to AI-upscaled older television content (the ’80s sitcom A Different World) on Netflix, noting warped faces, gibberish text, and unsettling artifacts: examples of systems prioritizing crispness over fidelity. Even if the images looked sharper, they didn’t look real.

Images taken from Hany Farid’s LinkedIn post.

What This Means for Documentary Politics and Practice

This is where politics and practice intersect.

Documentary has always grappled with reconstruction. The difference is that conventional reconstruction techniques, such as reenactments (as in The Act of Killing, 2012) or animation (such as Waltz With Bashir, 2008), foregrounded authorship; for the most part, they were clearly presented as the filmmakers’ version of events (even when revealed at the end of the work, such as in Stories We Tell, 2012). In AI-generated reconstruction, by contrast, the made-up material is unmarked and arbitrary: viewers cannot tell which details were invented, and no author chose them.

Throughout history, in its best form, documentary reconstruction involved deliberate, documented choices. What to clean. What to crop. What to annotate. Those decisions were part of the film’s argument and ethics. They could be debated. AI automation blurs the line between recovery and generation, between damaged truth and plausible fiction. When the reconstruction process becomes invisible, so does accountability.

Not all pixels are equal. Filling in foliage is unlikely to pose a serious problem; reconstructing a face is far more consequential. We must ask: When does reconstruction become fabrication? When does improvement turn into reinterpretation?

We must treat AI enhancement as a reconstruction tool. As such, the documentary community should insist on:

- Transparent disclosure of when and how AI systems transform source material.

- Audit trails documenting enhancement processes, comparable to restoration logs.

- Clear ethical standards distinguishing restoration for legibility from reconstruction that alters meaning.

- Contextual framing when synthetic detail is introduced, especially in evidentiary or politically sensitive material.
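As one possible shape for the audit trail described above, here is a minimal sketch of a log entry written each time a frame or clip is enhanced. Everything in it is an assumption made for illustration: the function name `enhancement_record`, the field names, and the tool name in the usage example are hypothetical and do not correspond to any existing standard or product. The idea is simply to fingerprint the source and the output, and to record which tool and settings transformed one into the other and why.

```python
import hashlib
import json
from datetime import datetime, timezone


def enhancement_record(source: bytes, output: bytes, tool: str,
                       settings: dict, purpose: str,
                       adds_synthetic_detail: bool) -> dict:
    """Build one audit-log entry for a single enhancement step.

    All field names are illustrative, not an established schema.
    """
    return {
        # Fingerprints let a reviewer confirm which files the entry refers to.
        "source_sha256": hashlib.sha256(source).hexdigest(),
        "output_sha256": hashlib.sha256(output).hexdigest(),
        "tool": tool,
        "settings": settings,
        # Why the step was done, e.g. legibility versus aesthetic polish.
        "purpose": purpose,
        # Flags steps that generate detail rather than only clean what exists.
        "adds_synthetic_detail": adds_synthetic_detail,
        "logged_at": datetime.now(timezone.utc).isoformat(),
    }


# Usage with placeholder bytes standing in for real media files.
record = enhancement_record(
    source=b"original frame bytes",
    output=b"upscaled frame bytes",
    tool="hypothetical-upscaler 1.0",
    settings={"scale": 4, "face_recovery": True},
    purpose="legibility of archival VHS footage",
    adds_synthetic_detail=True,
)
print(json.dumps(record, indent=2))
```

A log like this does not settle whether a given enhancement is acceptable. It makes the decision visible, so that it can be disclosed and debated in the way restoration choices traditionally have been.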

The tools to “optimize” evidence are now widely accessible. That democratization (or ubiquity) is both powerful and destabilizing. We cannot pretend these systems are merely assistants. AI may rebuild missing frames. It may smooth noise and interpolate pixels. But when it fills gaps with statistically plausible invention, it is no longer assisting; it is crossing a line with dangerous consequences.